We are all perhaps familiar with the fictionalised account of a pizza delivery service interacting with a potential customer. Over the course of the conversation, the person taking the order reveals an awareness of the customer’s medical condition, financial status, legal misdemeanours, and more. This situation, once seen as a joke or a frivolous exaggeration, is now strikingly close to our lived experience. As a recent RTI request has revealed, the Modi government’s proposed “National Social Registry” is designed to carry out all-round surveillance of each and every Indian via the Aadhar system. Data that was previously collected by various government bodies for specific governance-related purposes, and stored in separate silos, will now be brought together centrally using the unique Aadhar number. Computational tools and algorithms will then be applied extensively to this centralised data.

All sorts of personal data (on births, deaths, marriages, travel, migrations, address changes and financial status) will not only be collected to profile individuals but will also serve as a key policy input for governments. The government claims that this is to improve the performance of state-funded projects targeted at the poor and the marginalised, and that it seeks to “dynamically” record and verify the status of people below the poverty line who are beneficiaries of various projects. This, however, is a specious claim, since each and every citizen of the country will be under scrutiny. It is a clear violation of the right to privacy, which the Supreme Court has held to be a fundamental right: it fails the proportionality test and subjects everyone to a breach of privacy of massive proportions. So how does the Modi government plan to circumvent this? By amending the Aadhar Act to allow for the complete dismantling of the Supreme Court’s right-to-privacy judgement.

Fears of gross violations of individual rights through the proposed social registry have been expressed by none other than Manoranjan Kumar, a bureaucrat who was at one time one of its loudest proponents. Kumar has been deeply involved in discussions and preparations around the registry since it was first mooted in 2015. His enthusiasm has now given way to profound scepticism, and he has warned that computer algorithms can (and will, by design) be used to run all kinds of searches across various databases to profile individuals and communities. This, as we can well guess, is a recipe for disaster.

What we are now seeing is the latest, and by far the strongest, form of the panopticon in human history, powered by technological tools and being put in place by political regimes. The constant collection of information, after all, provides the basis for a regime of control and discipline. The question for us is: what exactly is the nature of the modern panopticon, propelled by digital technologies? Our personal data is today the very fulcrum of a significant proportion of private business ventures. Digital technologies actively and silently record and process every instance of our lives. Detailed profiles are prepared, and this information is transformed into usable services that are now an integral part of our lives. While private businesses use our data, often without our explicit consent, governments for their part are empowered to collect and use vast amounts of personal data. Such sweeping powers make for a regime of anticipatory surveillance, in which data can be collected and processed without citing any specific investigation. This point has most recently been eloquently reiterated in Edward Snowden’s autobiography, Permanent Record, in which Snowden describes how technological tools and regulatory mechanisms have colluded to create an extensive system of mass surveillance.

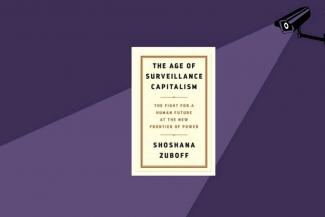

These concerns have been reflected in India. Since its inception in 2009, the Aadhar project has given added momentum to existing concerns about governmental overreach and the loss of privacy. Several other initiatives are equally worrying. India has proposed a centralised telecom interception system to automate eavesdropping on conversations. The Modi government also plans mass surveillance of private conversations and posts on social media. In response, we have been urged to seek specific rights (such as the “right to explanation”, the “right to erasure” and the “right to correction” detailed in a previous piece carried by Liberation). Consent and privacy have been the pivots around which any discussion of surveillance and data privacy takes place. It is in this context that Shoshana Zuboff’s The Age of Surveillance Capitalism: The Fight for a Human Future at the New Frontier of Power needs to be read for its description of the nature of modern surveillance systems.

Tracing the Contours of Surveillance Capitalism

“Demanding privacy from surveillance capitalists or lobbying for an end to commercial surveillance is like asking…a giraffe to shorten its neck”

Zuboff characterises the current economic model as “surveillance capitalism,” in which human experience is treated as raw material to be extracted and used to predict intentions, in order to produce and sell more goods and services. Its very existence depends on new computing tools such as machine learning. For example, personal data can be processed and converted into an application that insurance companies use to decide on the creditworthiness of their clients. It can be used to develop an application that helps a car owner find an empty parking spot, or the least congested route to her destination. Zuboff’s central argument is that this regime poses a specific challenge because of its tumultuous impact on the very concepts of consent and privacy.

A key development that led to surveillance capitalism emerging out of earlier models of capitalism is what Zuboff calls the discovery of “behavioural surplus”: the surplus value generated by mining enormous amounts of personal data and converting it into a marketable product. This surplus becomes available to corporations for uses beyond service improvement; its sole purpose is to ensure exponential profits. The rush to increase behavioural surplus, and thus ensure continuing profits, drives corporations inexorably towards systems that not only infer personal behaviour but predict it with increasing accuracy. This is made possible by the continuous expansion of the data that feeds into the prediction process and by the use of computational tools. We are now seeing “permissionless innovation”: a unilateral seizure of rights over data, without consent, to cater to these new needs. Data is continuously extracted, behaviour is predicted, and user experience is personalised and customised.

The quest for behavioural surplus has also moved into the offline world. Companies now track every moment of our daily lives in the physical world through smart-home devices, wearables, and applications such as Google Maps. Even human emotions are harnessed by computational methods that identify sentiments from textual and visual sources. This creeping incursion into daily routines slowly habituates people to it, and if a particular incursion generates too much of an uproar, companies adapt by promising reforms or occasionally paying fines. This, however, does nothing to check the ever-growing range of data collection made possible by tools such as ambient computing, ubiquitous computing and the Internet of Things (IoT).

From monitoring, surveillance capitalism has now moved into a new domain: behavioural control. Not only is data constantly collected, it is also processed and fed back to trigger desired commercial outcomes. Cars can be remotely disabled to facilitate loan recovery; Pokémon Go players are steered towards McDonald’s outlets; advertisements are shown to individuals when they are emotionally vulnerable and most likely to respond impulsively. In Zuboff’s narrative, human beings are now essentially Pavlov’s dogs, punished by the regime of surveillance capitalism for ‘undesirable’ behaviour and rewarded for ‘desirable’ behaviour.

How has what Zuboff calls “digital dispossession” (humans being dispossessed of control over their personal data) come about? She argues that tech companies such as Google and Facebook have benefited from an economic model grounded in libertarian notions of fundamental freedoms and deeply sceptical of regulation. They also benefited from the post-9/11 political climate in the US, which accepted and allowed exceptional levels of surveillance under the guise of fighting terrorism and protecting national security. Zuboff reminds us that long before Cambridge Analytica, tech companies were working closely with political campaigns (such as Obama’s 2008 campaign) to build voter profiles and advise on strategy.

Zuboff’s arguments powerfully remind readers that they are mere pawns within an elaborate system of social control, driven by technological tools that are controlled by big corporate houses or by governments. She convincingly argues that privacy and consent have been rendered toothless in this new regime of surveillance capitalism. Efforts to foreground privacy and consent are therefore doomed to fail.

Is it possible to “take back” big-data analytics from the state and from big business? How effectively would regulatory mechanisms work? Zuboff holds out hope that the fault lies with this particular form of capitalism (which is the product of a certain historical juncture), but her own arguments suggest otherwise. Big-data analytics seems incompatible with democratic control and far more in sync with authoritarian control. As David Harvey suggests, socialism or democracy will need technologies that embody mental conceptions of new social relations, unlike current technologies, in which the idea of surveillance-based social engineering is deeply embedded. Machine intelligence is the very means of production in surveillance capitalism, and alternative means of production may therefore have to emerge. Given the extent of compatibility between surveillance capitalism and digital technologies, it is difficult to imagine the existence of one without the other. Taking back the digital seems a difficult task, one that requires the reconstitution and rebuilding of digital technologies themselves.

A version of this piece appeared as the article ‘Consent and Privacy in the Age of Digital Technology’ published by the India Forum, https://www.theindiaforum.in/article/consent-and-privacy-age-digital-technology.

